The democratization of digital media has empowered millions to become storytellers, yet the acoustic dimension of content remains a significant hurdle for those without formal musical training. Professional orchestration has historically been a gatekept skill, requiring expensive instruments and years of technical study. However, the emergence of the AI Music Generator is fundamentally reconfiguring this creative hierarchy by providing an intuitive bridge between conceptual imagination and studio-quality sound. By utilizing generative neural networks to handle the complexities of music theory and sound engineering, the platform enables creators to produce original compositions that resonate with their specific audience. This shift moves the creative focus away from technical execution and toward strategic curation, allowing for a more fluid and efficient production lifecycle.

The Architectural Logic Of Modern Generative Music Platforms

To understand how these systems function, one must look past the simple interface to the underlying synthesis engine. These models are typically built on transformer-based architectures trained to recognize patterns across millions of measures of music. When a user provides a directive, the system isn’t simply rearranging pre-recorded loops; it predicts, one step at a time, the most probable continuation of the piece (the next note, chord, or slice of audio) that satisfies the requested stylistic constraints. In my testing, this results in a high degree of structural coherence that mimics the deliberate intent of a human composer.
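The idea of predicting the next element can be illustrated with a deliberately tiny sketch. It is not the platform's model: a bigram counter stands in for the transformer, and the note corpus, function names, and random seed are all made up for illustration.

```python
import random
from collections import Counter, defaultdict

# Toy "training data": note names standing in for the tokens a real model learns from.
CORPUS = ["C", "E", "G", "E", "C", "E", "G", "C", "A", "F", "A", "C", "G", "E", "C"]


def train_bigram(sequence):
    """Count how often each note follows each other note."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(sequence, sequence[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, length, rng):
    """Extend the melody one note at a time, sampling in proportion to the counts."""
    melody = [start]
    while len(melody) < length:
        followers = counts[melody[-1]]
        if not followers:  # no observed continuation for this note
            break
        notes = list(followers)
        weights = [followers[n] for n in notes]
        melody.append(rng.choices(notes, weights=weights, k=1)[0])
    return melody


model = train_bigram(CORPUS)
melody = generate(model, start="C", length=8, rng=random.Random(0))
print(" ".join(melody))
```

A production system conditions on far more context than the previous note, and on the text prompt as well, but the loop has the same shape: look at what exists so far, estimate what is likely to come next, sample, and repeat.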

Synthesizing Emotional Atmosphere Through High Dimensional Data

The most impressive aspect of current audio AI is its ability to interpret abstract emotional descriptors. In Text to Music systems, words like “ethereal,” “gritty,” or “triumphant” are mapped to specific acoustic markers such as reverb density, harmonic distortion, and melodic intervals. This linguistic mapping allows users to bypass the technical jargon of music production and communicate their needs in the language of storytelling. My observation is that this creates a much more direct path to the desired “vibe” of a project than traditional library searching.
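One way to picture this mapping is a lookup from descriptor words to acoustic settings. The sketch below is an assumption made for illustration: real systems learn these associations from data rather than reading them from a table, and the names `DESCRIPTOR_MARKERS` and `interpret`, along with every numeric value, are hypothetical.

```python
# Hypothetical lookup table; real systems learn these associations from data.
DESCRIPTOR_MARKERS = {
    "ethereal": {"reverb_density": 0.9, "harmonic_distortion": 0.1, "interval": "perfect fifth"},
    "gritty": {"reverb_density": 0.3, "harmonic_distortion": 0.8, "interval": "minor second"},
    "triumphant": {"reverb_density": 0.5, "harmonic_distortion": 0.2, "interval": "major third"},
}


def interpret(prompt):
    """Blend the markers of every known descriptor found in the prompt."""
    words = [w.strip(".,;:!?\"'").lower() for w in prompt.split()]
    hits = [DESCRIPTOR_MARKERS[w] for w in words if w in DESCRIPTOR_MARKERS]
    if not hits:
        return None  # no known descriptor in this toy table
    return {
        "reverb_density": sum(h["reverb_density"] for h in hits) / len(hits),
        "harmonic_distortion": sum(h["harmonic_distortion"] for h in hits) / len(hits),
        "intervals": [h["interval"] for h in hits],
    }


print(interpret("An ethereal, triumphant opening theme"))
```

Averaging is the simplest possible way to blend two descriptors. It is used here only to show that several mood words can pull a single set of parameters in different directions.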

Addressing The Consistency And Refinement Challenges In AI Audio

Despite the rapid acceleration of this technology, it is important to note that AI is an iterative partner rather than a flawless oracle. Occasionally, the generated audio may include artifacts or unexpected melodic shifts that require the user to regenerate or refine their prompt. Achieving a perfect “radio-ready” track often involves a trial-and-error process where the creator fine-tunes the balance between different genre tags. This level of human oversight remains essential to ensure the final product aligns with professional standards.

Operational Roadmap For Creating Custom Soundtracks

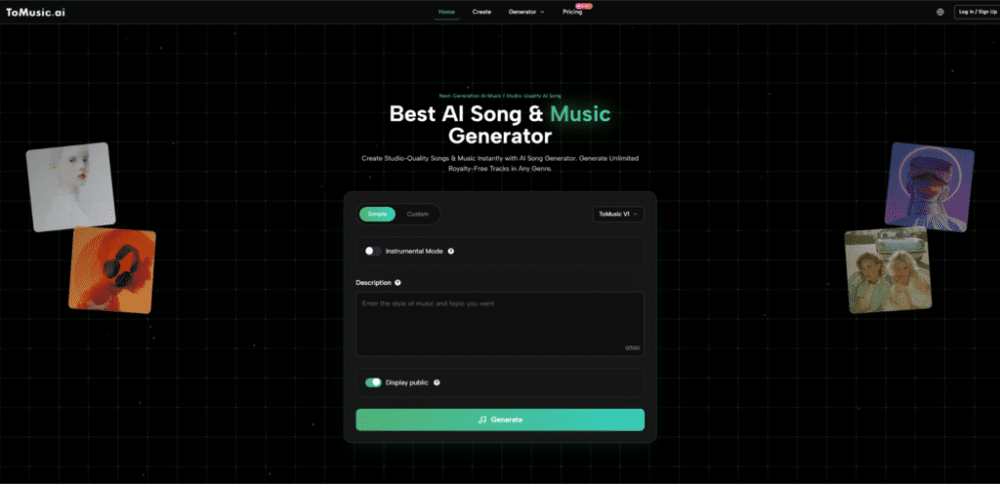

The platform’s workflow is engineered to be linear and non-intimidating, so that even first-time users can navigate the production process. According to the official functional layout, generation follows three steps:

Construct The Stylistic Blueprint And Thematic Foundation

The journey begins in the creation suite where you define the core identity of the track. You can write a detailed narrative prompt or select from a library of curated mood and genre presets. This initial input acts as the primary constraint for the AI, dictating the tempo, scale, and instrumentation that will be used to build the musical arrangement from the ground up.

Specify Structural Parameters And Vocal Integration Requirements

Once the style is established, you move to the configuration stage to define the structural attributes of the track. This involves setting the exact duration of the piece and deciding whether to include synthetic vocals. If a vocal track is requested, the system uses singing synthesis to match the lyrics to the generated melody, blending the human-like performance with the digital backing track.

Initiate Synthesis And Finalize The High Definition Export

The final phase involves triggering the cloud-based rendering engine, which typically produces a full preview within a minute. After the track is generated, you can listen to the composition to verify its quality and structural flow. If the result meets your requirements, you can proceed to download the final master in high-bitrate formats, making it ready for immediate use in any professional editing environment or broadcast platform.
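The three steps above can be summarized as a single request object. This is a sketch under stated assumptions, not the platform's API: the class `TrackRequest`, its field names, the `wav` default, and the validation rules are all hypothetical, and no network call is made.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class TrackRequest:
    """Hypothetical container for the choices made in the three steps."""

    prompt: str                   # step 1: narrative prompt or preset name
    duration_seconds: int         # step 2: exact length of the piece
    include_vocals: bool = False  # step 2: whether to add synthetic vocals
    lyrics: Optional[str] = None  # step 2: only needed when vocals are requested
    export_format: str = "wav"    # step 3: assumed format name, not from the source

    def validate(self):
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        if self.duration_seconds <= 0:
            raise ValueError("duration_seconds must be positive")
        if self.include_vocals and not self.lyrics:
            raise ValueError("lyrics are required when vocals are requested")


def build_payload(request):
    """Validate the request and return it as a plain dict ready to serialize."""
    request.validate()
    return asdict(request)


payload = build_payload(TrackRequest(prompt="warm lo-fi intro", duration_seconds=30))
print(payload)
```

Checking the inputs before anything is rendered mirrors the linear workflow: a missing prompt or a vocal request without lyrics is caught at the configuration stage rather than after the preview.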

Quantitative Comparison Between AI Synthesis And Static Audio Libraries

For organizations looking to optimize their asset procurement, it is vital to compare the utility of generative tools against traditional stock audio solutions.

| Performance Metric | Traditional Audio Libraries | AI Music Generator |
| --- | --- | --- |
| Customization Depth | Fixed and Static | Dynamic and Adjustable |
| Discovery Friction | High (Manual Searching) | Low (Prompt-based Creation) |
| Brand Exclusivity | Very Low (Non-exclusive) | High (Unique Synthesis) |
| Content Synchronization | Manual Editing Required | Automated to Specific Length |
| Audio Quality | Variable (Source Dependent) | Consistent (Model Dependent) |

Broadening The Scope Of Application Within The Creator Economy

The utility of automated music generation extends far beyond simple background tracks, offering new possibilities for interactive and personalized media experiences.

Scaling Podcast Production With Custom Intro And Outro Themes

Podcast producers often struggle to find a signature sound that hasn’t already been used by other shows. By using a generative tool, a producer can create a unique sonic brand that evolves over time while maintaining a consistent thematic core. In my observation, this ability to generate variations of a theme song helps in keeping the production value high across hundreds of episodes without the need for constant reinvestment in new music.

Facilitating Rapid Prototyping For Agency Level Video Campaigns

In the fast-moving world of digital advertising, waiting for a custom score can delay a campaign launch by weeks. Agencies can now use generative AI to create high-fidelity soundtracks for client pitches or social media ads in minutes. This allows for a much more agile feedback loop in which the music is adjusted alongside the visual edits, so the final product stays synchronized with the specific emotional beats of the script.

The Future Of Collaborative Creativity In The Age Of Intelligence

As we look toward the next phase of media evolution, the distinction between “human-made” and “AI-assisted” is becoming less relevant than the quality of the final experience. The true power of these tools lies in their ability to act as a force multiplier for human taste. By removing the technical barriers to entry, we are entering an era where the most valuable skill is no longer the ability to play an instrument, but the ability to imagine a sound and direct a machine to bring it into existence.