Azure AI workloads grew 39% year-over-year in 2025. 80% of Fortune 500 companies are already running on Azure AI Foundry. The technology is proven, the investment is there – and yet McKinsey data shows only one in three companies actually scales AI to real business value.

So what’s breaking?

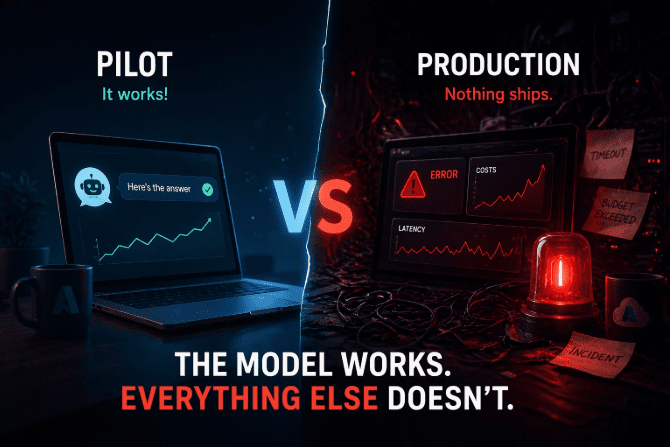

The pilot trap

A team picks a use case, spins up an Azure OpenAI instance, gets solid results in a sandbox, and presents a working demo. Leadership approves a production rollout. Then six months pass and nothing ships.

The model isn’t the problem. The model was fine from day one.

What breaks is everything around it – the infrastructure, the deployment process, the cost controls, the monitoring. The things that felt secondary during the pilot become the actual bottleneck in production.

Regular software projects don’t work this way. With AI, the experimentation phase is fast and forgiving. The path to production is where the real complexity lives – and where Azure AI consulting tends to have the most impact, because the problems are predictable and the solutions are already known.

What “everything around it” actually means

Environments that don’t behave consistently. A model tuned in a dev environment often behaves differently under real traffic patterns. Without reproducible infrastructure – think Bicep or Terraform managed through Azure DevOps – every deployment is a manual process that carries the risk of human error.
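
One way to make the consistency problem concrete is a drift check between environments. The sketch below is a minimal illustration in Python, assuming each environment's settings have been exported to a flat dictionary. The setting names and values are hypothetical, and in a real setup the source of truth would be the Bicep or Terraform definitions rather than a script like this.

```python
from typing import Any


def config_drift(dev: dict[str, Any], prod: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return settings whose values differ between two environments.

    A key that is missing on one side is reported with None for that side.
    """
    drift: dict[str, tuple[Any, Any]] = {}
    for key in sorted(dev.keys() | prod.keys()):
        dev_value, prod_value = dev.get(key), prod.get(key)
        if dev_value != prod_value:
            drift[key] = (dev_value, prod_value)
    return drift


# Hypothetical settings, for illustration only.
dev_settings = {
    "model_deployment": "chat-dev",
    "max_tokens": 1024,
    "region": "westeurope",
}
prod_settings = {
    "model_deployment": "chat-prod",
    "max_tokens": 512,
    "region": "westeurope",
    "rate_limit_rpm": 600,
}

if __name__ == "__main__":
    for name, (dev_value, prod_value) in config_drift(dev_settings, prod_settings).items():
        print(f"{name}: dev={dev_value!r} prod={prod_value!r}")
```

Some differences, such as the deployment name, are expected. The useful output is the list of differences nobody intended, like a token limit that was changed by hand in one environment only.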

Costs that appear out of nowhere. Token sprawl turned a lot of 2025 AI pilots into budget emergencies. Without proper tagging, cost alerts, and governance built into the architecture from the start, cloud spend becomes unpredictable fast. Azure Cost Management helps – but only if someone sets it up intentionally.
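
To show why token usage needs a budget check from day one, here is a rough Python sketch that turns token counts into a cost and projects month-end spend. The prices are placeholders, not real Azure rates, and the linear projection is a simplifying assumption. Azure Cost Management budgets and alerts would do this job in a real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Price per 1,000 tokens in USD. Values used with this class are placeholders."""
    input_per_1k: float
    output_per_1k: float


def request_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Cost of a single request given its input and output token counts."""
    return (input_tokens / 1000) * price.input_per_1k + (output_tokens / 1000) * price.output_per_1k


def projected_month_spend(spend_so_far: float, day_of_month: int, days_in_month: int = 30) -> float:
    """Linear projection of month-end spend from the spend to date."""
    if day_of_month < 1:
        raise ValueError("day_of_month must be at least 1")
    return spend_so_far / day_of_month * days_in_month


def over_budget(spend_so_far: float, day_of_month: int, monthly_budget: float,
                days_in_month: int = 30) -> bool:
    """True when the projected month-end spend exceeds the budget."""
    return projected_month_spend(spend_so_far, day_of_month, days_in_month) > monthly_budget


if __name__ == "__main__":
    price = TokenPrice(input_per_1k=0.5, output_per_1k=1.5)  # hypothetical prices
    print(request_cost(input_tokens=2000, output_tokens=1000, price=price))
    print(over_budget(spend_so_far=300.0, day_of_month=10, monthly_budget=800.0))
```

The point of the projection is timing: a check like this can flag a problem on day 10 instead of on the invoice.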

No visibility into what the model is actually doing. Azure Monitor and Application Insights aren’t glamorous, but they’re what separates a system you can operate from one you’re just hoping works. Teams that skip observability early spend weeks in reactive mode later.
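
As a small illustration of what "knowing what the model is doing" can mean, the Python sketch below tracks the failure rate over the most recent calls and flags degradation when it crosses a threshold. The window size and threshold are arbitrary assumptions, and this is plain Python rather than the Azure Monitor or Application Insights API. In production the same signal would typically be an alert rule on collected telemetry.

```python
from collections import deque


class RollingErrorRate:
    """Tracks the failure rate over the most recent `window` calls."""

    def __init__(self, window: int, threshold: float) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        self._outcomes: deque = deque(maxlen=window)
        self._threshold = threshold

    def record(self, success: bool) -> None:
        """Record the outcome of one call; the oldest outcome drops out when full."""
        self._outcomes.append(success)

    @property
    def error_rate(self) -> float:
        if not self._outcomes:
            return 0.0
        failures = sum(1 for ok in self._outcomes if not ok)
        return failures / len(self._outcomes)

    def degraded(self) -> bool:
        # Only flag once the window is full, to avoid noise right after startup.
        window_full = len(self._outcomes) == self._outcomes.maxlen
        return window_full and self.error_rate > self._threshold


if __name__ == "__main__":
    monitor = RollingErrorRate(window=4, threshold=0.25)  # hypothetical settings
    for outcome in [True, True, False, False]:
        monitor.record(outcome)
    print(monitor.error_rate, monitor.degraded())
```

What counts as a failure is a design decision in its own right: an HTTP error is easy to detect, while a drop in answer quality usually needs an evaluation step feeding the same kind of counter.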

Scaling that breaks the architecture. What handles 100 requests a day often falls apart at 10,000. And the painful discovery usually happens after launch, not before – because no one stress-tested the pipeline end to end.
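
A stress test does not have to be elaborate to be useful. The Python sketch below fires concurrent requests at a handler and reports a tail latency figure. The `fake_pipeline` function is a hypothetical stand-in for the real end-to-end call, and the request counts are arbitrary. A dedicated load-testing tool would be the better choice for anything beyond a first look.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile; pct must be in the range (0, 100]."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def timed_call(handler: Callable[[int], object], request_id: int) -> float:
    """Run one request and return its latency in seconds."""
    start = time.perf_counter()
    handler(request_id)
    return time.perf_counter() - start


def run_load_test(handler: Callable[[int], object], total_requests: int,
                  concurrency: int) -> list[float]:
    """Send total_requests through handler using `concurrency` worker threads."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(lambda i: timed_call(handler, i), range(total_requests)))


def fake_pipeline(request_id: int) -> str:
    """Hypothetical stand-in for the real pipeline call."""
    time.sleep(0.001)
    return f"response-{request_id}"


if __name__ == "__main__":
    latencies = run_load_test(fake_pipeline, total_requests=200, concurrency=20)
    print(f"p95 latency: {percentile(latencies, 95) * 1000:.1f} ms")
```

Tail latency is reported instead of the average because the average hides exactly the slow requests that users notice first when load goes up.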

The shift that changes outcomes

Companies that get past the pilot stage tend to share one mindset: they treat Azure AI as infrastructure, not a product feature. That means CI/CD pipelines for model delivery, not manual deployments. It means choosing between Azure OpenAI Service, AI Foundry, and custom ML pipelines based on actual load and latency requirements – not just what was easiest to prototype with.

It also usually means bringing in people who’ve seen this pattern before. The teams that ship fastest aren’t the ones who figure it out themselves – they’re the ones who don’t spend three months rediscovering problems that already have solutions.

What it looks like when it works

The teams that get this right don’t seem to move faster during the pilot – they actually move slower, more deliberately. They spend time on things that feel unexciting: defining environments properly, setting up cost tagging before the first real workload, building monitoring before anyone asks for it.

Then, when it’s time to scale, there’s nothing to untangle. Releases go out without the team holding their breath. A spike in usage doesn’t trigger a budget crisis. When something degrades, someone knows within minutes – not days.

It’s not magic. It’s just what happens when infrastructure is treated as part of the product from the beginning, not scaffolding to be replaced later.

The honest starting point

Before thinking about which Azure services to use, answer three questions:

- What does the path from experiment to production actually look like on your team right now?

- Do you know what your AI infrastructure costs per inference, per day, per user?

- If the model degrades next week, how long before someone notices?

If the answers are vague, that’s where to start – not with the model.
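
For the cost question in particular, the arithmetic is simple once usage is logged per call. The sketch below is one possible way to compute the three figures in Python, assuming each inference is recorded with a date, a user, and an attributed cost. The record shape and field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class UsageRecord:
    """One logged inference. Field names are illustrative assumptions."""
    day: date
    user_id: str
    cost_usd: float


def unit_costs(records: list[UsageRecord]) -> dict[str, float]:
    """Average cost per inference, per active day, and per active user."""
    if not records:
        raise ValueError("records must not be empty")
    total = sum(record.cost_usd for record in records)
    active_days = {record.day for record in records}
    active_users = {record.user_id for record in records}
    return {
        "per_inference": total / len(records),
        "per_day": total / len(active_days),
        "per_user": total / len(active_users),
    }


if __name__ == "__main__":
    sample = [  # made-up numbers, for illustration only
        UsageRecord(date(2025, 6, 1), "alice", 0.5),
        UsageRecord(date(2025, 6, 1), "bob", 0.25),
        UsageRecord(date(2025, 6, 2), "alice", 0.25),
        UsageRecord(date(2025, 6, 2), "bob", 1.0),
    ]
    print(unit_costs(sample))
```

If the data needed to fill in a record like this does not exist yet, that gap is the answer to the second question.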

Bottom line

The reason most Azure AI projects stall isn’t the model. It’s that the pilot phase rewards speed and ignores everything that makes a system production-ready. The teams that close that gap fastest are the ones who treat infrastructure, observability, and cost governance as first-class concerns – not cleanup tasks for later.

The model works. The question is whether everything around it does too. If you’re not sure – visit Alpacked and see how teams that have been through this think about it.